USAD (Urban Shuttles Autonomously Driven). This project aims at developing vehicles capable of driving autonomously in an urban setting, so as to increase the public transportation offered to citizens, even under very low demand. Such vehicles would keep costs reasonable for the service provider, and therefore for the customer, while providing a 24-hour on-demand service. We believe this is the only option for supporting a reduction in the number of privately owned cars. Another potential application is moving goods in urban settings, to obtain a city-wide, low-cost public logistics system. This research focuses on the enabling technology, i.e., autonomous navigation, which in our view requires solving the perception side of navigation. The activity is led by IRAlab (Università di Milano - Bicocca) and carried out together with AIRlab (Politecnico di Milano) and Info Solution S.p.A.

Agricultural robotics. This project aims at developing vehicles capable of driving autonomously in the moderate off-road conditions typical of agricultural fields, such as olive groves or horticultural crop fields, to perform various tasks. Relevant examples of such tasks are weeding and watering.

Road Layout Estimation. In this project we present a general framework for urban road layout estimation, together with a specific application to the vehicle localization problem. Localization is performed by synergistically exploiting data from different sensors, as well as by map-matching against cartographic maps.

PTAM-inspired Visual Odometry. The goal of this work is to create nodes for the ROS environment that build a map of the environment around the robot and estimate the robot's position and orientation (which together define its pose). The estimate is based on the map and on the images received from two cameras mounted on the robot with a known relative position (the offset of one camera with respect to the other, also called the baseline). Two nodes are used: a Mapper node, which builds the map representing the environment around the robot, and a Tracker node, which estimates the robot's pose from the map built by the Mapper node.
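
The page does not say how the Mapper node turns stereo images into map points. As a hedged illustration of why a known baseline matters, the sketch below applies the standard rectified-stereo relation Z = f·B/d to a single matched pixel pair. The function name, its parameters, and the assumption that both images are already rectified are ours for illustration, not taken from the project's code.

```python
from typing import Tuple


def triangulate_rectified(
    u_left: float,
    v_left: float,
    u_right: float,
    fx: float,
    fy: float,
    cx: float,
    cy: float,
    baseline: float,
) -> Tuple[float, float, float]:
    """Back-project one matched pixel pair from a rectified stereo rig.

    Hypothetical helper: assumes both images are rectified, so a matched
    point lies on the same row in both images and differs only by the
    horizontal disparity d = u_left - u_right. Returns (X, Y, Z) in the
    left camera frame, in the same unit as `baseline`.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        # Zero disparity means a point at infinity; negative means a bad match.
        raise ValueError("disparity must be positive")
    z = fx * baseline / disparity   # depth: Z = f * B / d
    x = (u_left - cx) * z / fx      # pinhole back-projection along the image x axis
    y = (v_left - cy) * z / fy      # pinhole back-projection along the image y axis
    return x, y, z


if __name__ == "__main__":
    # 800 px focal length, 0.5 m baseline, 40 px disparity -> 10 m depth.
    print(triangulate_rectified(400.0, 240.0, 360.0,
                                fx=800.0, fy=800.0, cx=320.0, cy=240.0,
                                baseline=0.5))
```

Because depth is inversely proportional to disparity, a one-pixel matching error costs more depth accuracy for distant points than for near ones, and a wider baseline reduces that error; this is why the relative position of the two cameras must be known precisely.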

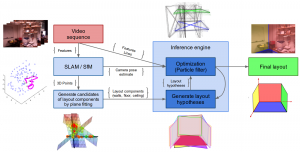

Free your Camera: 3D Indoor Scene Understanding from Arbitrary Camera Motion. In this work we address the problem of estimating the 3D structural layout of complex and cluttered indoor scenes from monocular video sequences, where the observer can move freely in the surrounding space. We propose an effective probabilistic formulation that allows us to generate, evaluate and optimize layout hypotheses by integrating new image evidence as the observer moves. Compared to state-of-the-art work, our approach makes significantly less restrictive assumptions about the scene and the observer (e.g., the Manhattan world assumption or known camera motion). We introduce a new challenging dataset and present an extensive experimental evaluation, which demonstrates that our formulation runs in near real time and outperforms state-of-the-art methods while operating under significantly less constrained conditions.

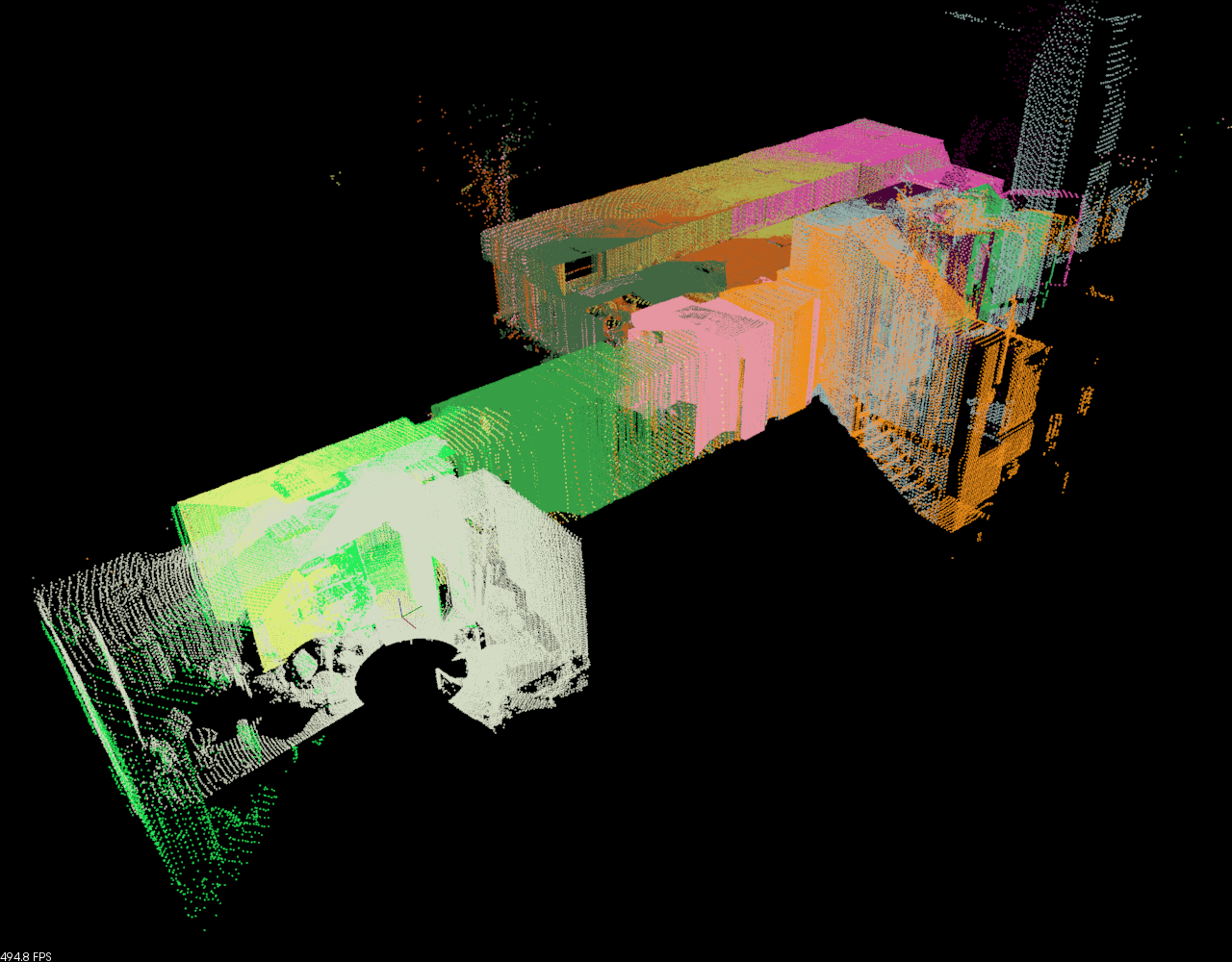

3D Mapping with laser scanners. The aim of this project is to develop a system able to map a large area using a standard laser scanner. The project uses an old Robuter 1 mobile base, whose original control system has been removed and replaced by internally developed boards and processing.

Indoor autonomous navigation with object interaction. The aim of this project is to develop a robot able to guide people and to deliver small packages within an indoor environment, using an elevator and opening doors when necessary. The project uses a Volksbot RT3 mobile base.

IRALAB

Informatics and Robotics for Automation